Architectural Quality Attributes

I. Usability

This is the definition of the usability attributes that are required from the business. This addresses the complexity/simplicity of use.

II. Modifiability

This attribute defines the maintainability and modifiability of this feature/functionality. Considerations to the ease of making changes all the way to the availability of resources with the technical knowledge to support the feature/functionality are addressed here.

III. Performance

This attributes defines the performance qualities for this feature/functionality. Response times, processing times, etc. should be considered.

IV. Availability

This attribute defines what qualities pertain to how available this feature/functionality must be to users and other systems. Failover, recovery times, etc. would be considered here.

V. Security

This attribute defines the security qualities that define this feature/functionality. Elements as in protecting access, data, etc. should be considered.

VI. Testability

This attribute defines the quality attributes for testing this feature/function. Ease of testing – including Unit as well as QA are considered. Special attention to quality controls like test automation, heartbeats, dashboards that self test are also included in these considerations.

VII. Scalability

This attribute defines how this feature/functionality scales. Overall capacity should be considered at this point including future growth.

VIII. Portability

This attribute defines the portability of this functionality – Cross Plattform functionality as well as maintaining a light interdependence on common vendor functdionality (i.e.WebServers: JBoss vs Weblogic &/or WebSphere).

IX. Business Qualities

A. Time

This attribute defines the time constraints to deliver this functionality..

B. Cost (CBA)

This attribute defines the cost benefit of this functionality.

C. Market

This attribute defines the market segmentation of this functionality. Since we aren’t really managing to a Product Line, I’m OK with Omitting this in our definitions of architecture.

D. Integration

This attribute discusses the system integration of this functionality to other systems

E. Lifetime

This attribute defines the expected time this product is expected to exist, iterations that are expected to impact it, etc.

The 4 building blocks of Architecting Systems for Scale

A quick gloss on the building blocks:

In this post I'll attempt to document some of the scalability architecture lessons learned while working on systems at Yahoo! and Digg.

I've attempted to maintain a color convention for diagrams in this post:

Load Balancing: Scalability & Redundancy

The ideal system increases capacity linearly with adding hardware. In such a system, if you have one machine and add another, your capacity would double. If you had three and you add another, your capacity would increase by 33%. Let's call this horizontal scalability.

On the failure side, an ideal system isn't disrupted by the loss of a server. Losing a server should simply decrease system capacity by the same amount it increased overall capacity when it was added. Let's call this redundancy.

Both horizontal scalability and redundancy are usually achieved via load balancing.

(This article won't address vertical scalability, as it is usually an undesirable property for a large system, as there is inevitably a point where it becomes cheaper to add capacity in the form on additional machines rather than additional resources of one machine, and redundancy and vertical scaling can be at odds with one-another.)

Load balancing is the process of spreading requests across multiple resources according to some metric (random, round-robin, random with weighting for machine capacity, etc) and their current status (available for requests, not responding, elevated error rate, etc).

Load needs to be balanced between user requests and your web servers, but must also be balanced at every stage to achieve full scalability and redundancy for your system. A moderately large system may balance load at three layers: from the

Smart Clients

Adding load-balancing functionality into your database (cache, service, etc) client is usually an attractive solution for the developer. Is it attractive because it is the simplest solution? Usually, no. Is it seductive because it is the most robust? Sadly, no. Is it alluring because it'll be easy to reuse? Tragically, no.

Developers lean towards smart clients because they are developers, and so they are used to writing software to solve their problems, and smart clients are software.

With that caveat in mind, what is a smart client? It is a client which takes a pool of service hosts and balances load across them, detects downed hosts and avoids sending requests their way (they also have to detect recovered hosts, deal with adding new hosts, etc, making them fun to get working decently and a terror to get working correctly).

Hardware Load Balancers

The most expensive--but very high performance--solution to load balancing is to buy a dedicated hardware load balancer (something like a Citrix NetScaler). While they can solve a remarkable range of problems, hardware solutions are remarkably expensive, and they are also "non-trivial" to configure.

As such, generally even large companies with substantial budgets will often avoid using dedicated hardware for all their load-balancing needs; instead they use them only as the first point of contact from user requests to their infrastructure, and use other mechanisms (smart clients or the hybrid approach discussed in the next section) for load-balancing for traffic within their network.

Software Load Balancers

If you want to avoid the pain of creating a smart client, and purchasing dedicated hardware is excessive, then the universe has been kind enough to provide a hybrid approach: software load-balancers.

HAProxy is a great example of this approach. It runs locally on each of your boxes, and each service you want to load-balance has a locally bound port. For example, you might have your platform machines accessible via localhost:9000, your database read-pool at localhost:9001 and your database write-pool at localhost:9002. HAProxy manages healthchecks and will remove and return machines to those pools according to your configuration, as well as balancing across all the machines in those pools as well.

For most systems, I'd recommend starting with a software load balancer and moving to smart clients or hardware load balancing only with deliberate need.

Caching

Load balancing helps you scale horizontally across an ever-increasing number of servers, but caching will enable you to make vastly better use of the resources you already have, as well as making otherwise unattainable product requirements feasible.

Caching consists of: precalculating results (e.g. the number of visits from each referring domain for the previous day), pre-generating expensive indexes (e.g. suggested stories based on a user's click history), and storing copies of frequently accessed data in a faster backend (e.g. Memcache instead of PostgreSQL.

In practice, caching is important earlier in the development process than load-balancing, and starting with a consistent caching strategy will save you time later on. It also ensures you don't optimize access patterns which can't be replicated with your caching mechanism or access patterns where performance becomes unimportant after the addition of caching (I've found that many heavily optimized Cassandra applications are a challenge to cleanly add caching to if/when the database's caching strategy can't be applied to your access patterns, as the datamodel is generally inconsistent between the Cassandra and your cache).

Application Versus Database Caching

There are two primary approaches to caching: application caching and database caching (most systems rely heavily on both).

Application caching requires explicit integration in the application code itself. Usually it will check if a value is in the cache; if not, retrieve the value from the database; then write that value into the cache (this value is especially common if you are using a cache which observes the least recently used caching algorithm). The code typically looks like (specifically this is a read-through cache, as it reads the value from the database into the cache if it is missing from the cache):

key = "user.%s" % user_id

user_blob = memcache.get(key)

if user_blob is None: user = mysql.query("SELECT * FROM users WHERE user_id=\"%s\"", user_id)

if user:

memcache.set(key, json.dumps(user))

return user

else:

return json.loads(user_blob)

The other side of the coin is database caching.

When you flip your database on, you're going to get some level of default configuration which will provide some degree of caching and performance. Those initial settings will be optimized for a generic usecase, and by tweaking them to your system's access patterns you can generally squeeze a great deal of performance improvement.

The beauty of database caching is that your application code gets faster "for free", and a talented DBA or operational engineer can uncover quite a bit of performance without your code changing a whit (my colleague Rob Coli spent some time recently optimizing our configuration for Cassandra row caches, and was succcessful to the extent that he spent a week harassing us with graphs showing the I/O load dropping dramatically and request latencies improving substantially as well).

In Memory Caches

The most potent--in terms of raw performance--caches you'll encounter are those which store their entire set of data in memory. Memcached andRedis are both examples of in-memory caches (caveat: Redis can be configured to store some data to disk). This is because accesses to RAM are orders of magnitudefaster than those to disk.

On the other hand, you'll generally have far less RAM available than disk space, so you'll need a strategy for only keeping the hot subset of your data in your memory cache. The most straightforward strategy is least recently used, and is employed by Memcache (and Redis as of 2.2 can be configured to employ it as well). LRU works by evicting less commonly used data in preference of more frequently used data, and is almost always an appropriate caching strategy.

Content Distribution Networks

A particular kind of cache (some might argue with this usage of the term, but I find it fitting) which comes into play for sites serving large amounts of static media is the content distribution network.

CDNs take the burden of serving static media off of your application servers (which are typically optimzed for serving dynamic pages rather than static media), and provide geographic distribution. Overall, your static assets will load more quickly and with less strain on your servers (but a new strain of business expense).

In a typical CDN setup, a request will first ask your CDN for a piece of static media, the CDN will serve that content if it has it locally available (HTTP headers are used for configuring how the CDN caches a given piece of content). If it isn't available, the CDN will query your servers for the file and then cache it locally and serve it to the requesting user (in this configuration they are acting as a read-through cache).

If your site isn't yet large enough to merit its own CDN, you can ease a future transition by serving your static media off a separate subdomain (e.g. static.example.com) using a lightweight HTTP server like Nginx, and cutover the DNS from your servers to a CDN at a later date.

Cache Invalidation

While caching is fantastic, it does require you to maintain consistency between your caches and the source of truth (i.e. your database), at risk of truly bizarre applicaiton behavior.

Solving this problem is known as cache invalidation.

If you're dealing with a single datacenter, it tends to be a straightforward problem, but it's easy to introduce errors if you have multiple codepaths writing to your database and cache (which is almost always going to happen if you don't go into writing the application with a caching strategy already in mind). At a high level, the solution is: each time a value changes, write the new value into the cache (this is called a write-through cache) or simply delete the current value from the cache and allow a read-through cache to populate it later (choosing between read and write through caches depends on your application's details, but generally I prefer write-through caches as they reduce likelihood of a stampede on your backend database).

Invalidation becomes meaningfully more challenging for scenarios involving fuzzy queries (e.g if you are trying to add application level caching in-front of a full-text search engine likeSOLR), or modifications to unknown number of elements (e.g. deleting all objects created more than a week ago).

In those scenarios you have to consider relying fully on database caching, adding aggressive expirations to the cached data, or reworking your application's logic to avoid the issue (e.g. instead of DELETE FROM a WHERE..., retrieve all the items which match the criteria, invalidate the corresponding cache rows and then delete the rows by their primary key explicitly).

Off-Line Processing

As a system grows more complex, it is almost always necessary to perform processing which can't be performed in-line with a client's request either because it is creates unacceptable latency (e.g. you want to want to propagate a user's action across a social graph) or it because it needs to occur periodically (e.g. want to create daily rollups of analytics).

Message Queues

For processing you'd like to perform inline with a request but is too slow, the easiest solution is to create a message queue (for example, RabbitMQ). Message queues allow your web applications to quickly publish messages to the queue, and have other consumers processes perform the processing outside the scope and timeline of the client request.

Dividing work between off-line work handled by a consumer and in-line work done by the web application depends entirely on the interface you are exposing to your users. Generally you'll either:

Scheduling Periodic Tasks

Almost all large systems require daily or hourly tasks, but unfortunately this seems to still be a problem waiting for a widely accepted solution which easily supports redundancy. In the meantime you're probably still stuck with cron, but you could use the cronjobs to publish messages to a consumer, which would mean that the cron machine is only responsible for scheduling rather than needing to perform all the processing.

Does anyone know of recognized tools which solve this problem? I've seen many homebrew systems, but nothing clean and reusable. Sure, you can store the cronjobs in a Puppetconfig for a machine, which makes recovering from losing that machine easy, but it would still require a manual recovery, which is probably acceptable but not quite perfect.

Map-Reduce

If your large scale application is dealing with a large quantity of data, at some point you're likely to add support for map-reduce, probably using Hadoop, and maybeHive or HBase.

Adding a map-reduce layer makes it possible to perform data and/or processing intensive operations in a reasonable amount of time. You might use it for calculating suggested users in a social graph, or for generating analytics reports.

For sufficiently small systems you can often get away with adhoc queries on a SQL database, but that approach may not scale up trivially once the quantity of data stored or write-load requires sharding your database, and will usually require dedicated slaves for the purpose of performing these queries (at which point, maybe you'd rather use a system designed for analyzing large quantities of data, rather than fighting your database).

Platform Layer

Most applications start out with a web application communicating directly with a database. This approach tends to be sufficient for most applications, but there are some compelling reasons for adding a platform layer, such that your web applications communicate with a platform layer which in turn communicates with your databases.

First, separating the platform and web application allow you to scale the pieces independently. If you add a new API, you can add platform servers without adding unnecessary capacity for your web application tier. (Generally, specializing your servers' role opens up an additional level of configuration optimization which isn't available for general purpose machines; your database machine will usually have a high I/O load and will benefit from a solid-state drive, but your well-configured application server probably isn't reading from disk at all during normal operation, but might benefit from more CPU.)

Second, adding a platform layer can be a way to reuse your infrastructure for multiple products or interfaces (a web application, an API, an iPhone app, etc) without writing too much redundant boilerplate code for dealing with caches, databases, etc.

Third, a sometimes underappreciated aspect of platform layers is that they make it easier to scale an organization. At their best, a platform exposes a crisp product-agnostic interface which masks implementation details. If done well, this allows multiple independent teams to develop utilizing the platform's capabilities, as well as another team implementing/optimizing the platform itself.

I had intended to go into moderate detail on handling multiple data-centers, but that topic truly deserves its own post, so I'll only mention that cache invalidation and data replication/consistency become rather interesting problems at that stage.

I'm sure I've made some controversial statements in this post, which I hope the dear reader will argue with such that we can both learn a bit. Thanks for reading!

Source: http://lethain.com/introduction-to-architecting-systems-for-scale/

The 7 stages of scaling web apps

Good presentation of the stages a typical successful website goes through:

Prime directive of scaling: Think Horizontally at every point in your architecture, not just at the web tier. You may not agree with everything, but there's a lot of useful advice. Here's a summary:

I’ve spent the last five years implementing and thinking about service oriented architectures. One of the core benefits of a service oriented approach is the promise of greatly enhanced scalability and redundancy. But to realise these benefits we have to write our services to be ‘scalable’. What does this mean?

There are two fundamental ways we can scale software: 'Vertically' or 'horizontally'.

Is Hibernate the best choice?

Is Hibernate the best choice? Or is the technical marketing of other ORM vendors lacking? Recently Jonathan Lehr posed a question on his blog: "Is Hibernate the best choice?", and this lead me to ask the same question.

Although, I tend to use Hibernate as my first choice, it would be nice to see some head to head comparisons of Hibernate vs. TopLink (pros and cons), Hibernate vs. OpenJPA, Hibernate vs. Cayenne, etc. Searching around finds that many of the comparison are pretty old and not very detailed or compelling.

Having used other ORM frameworks, I found that when something goes wrong with Hibernate, you can usually google and find an answer, and there are many books on Hibernate. In my experience, the other frameworks seemed to be a less well-worn path and it is harder to find answers to even common problems. This is not to say that Hibernate is better, but that it is a lot more popular. In the end, I use Hibernate because my clients use it, if my clients switched to TopLink or OpenJPA, then I would use them as well.

So this begs the question, if Hibernate works for you, you just might have something else to do, like implementing a client solution that makes your client money, than to try several other ORM frameworks. How much time should someone spend learning a new ORM framework (new to them anyway)?

Don't get this wrong, trying out new ORM frameworks is fine. If there is a large IT/developer organization, and you have a certain selection criteria like integrating with legacy databases, conformance to JPA specification, ability to hire new developers, easy of use, etc. then by all means having someone create a few prototypes and/or proofs of concepts and try out a few ORM frameworks is great. There is often good ROI in this type of testing. Perhaps share your findings with the rest of us.

However, it seems if you are a vendor of a JPA solution, you could start by pointing out how your product differs from Hibernate. Like it or not, Hibernate dominates the mind-share of developers. If you can't prove your ORM frameworks has compelling reasons for switching, why should developers spend their time evaluating your product?

Now let me boil things down to brass tacks, it seems vendors of the ORMs should write white-papers, articles, blogs, and such to highlight the advantages of their ORM framework versus Hibernate. Logic dictates that if you have a product and there is a competing product that dominates the market that you might want to highlight what differentiates your product from the dominate one.

As a test, let's go to different vendor sites and see if they have comparisons of their ORM framework vs. the 800 pound gorilla, Hibernate.

So first let's go to Oracle TopLink website, you would expect since Hibernate has such a huge adoption rate in the industry that Oracle would want to point out why TopLink is better like a nice white-paper perhaps featured prominently on their TopLink site (see graph).

After hunting around a bit this entry appeared in the TopLink Essentials FAQ, Why should TopLink Essentials be used instead of JBoss(TM) Hibernate?

The two main points that seemed intriguing were as follows:

"Customers with any degree of complexity in the domain model or relational schemas, most notably where changing the schema is not an option, will benefit from the flexibility and proven nature of TopLink."

NOTE: At times mapping Hibernate to legacy systems can be challenging. How is TopLink better at this? Are there articles or white-papers, etc. that attempt to prove that TopLink is better at legacy integration? (I find that many developers are not aware of all of the features that Hibernate provides for legacy mapping.)

"As the reference implementation of JPA TopLink offers the first certified implementation of this new standard. as well as providing some useful value-add functionality. Going forward this open source project will continue to innovate based on contributions from Oracle, Sun, and others."

NOTE: This is compelling to me since I now use the JPA interface to Hibernate whenever I can.

Now I did not find the arguments in the FAQ particularly compelling or at all detailed. Sadly, you can find more compelling arguments in some of the TopLink public forums and random blogs. However, none so compelling that I feel the sudden need to switch.

Now on to the BEA site to look at dear KODO. I have always heard good things about KODO. Sadly, I found the BEA KODO site to be very out of date. It mentions a 2005 award for KODO as the lead news item. It also mentions that OpenJPA is in incubation, it has been out for a while. Even the FAQ, which did mention Hibernate, merely mentions that Hibernate is not EJB3 (seems it should say Hibernate is not JPA). This site really seems out of date and like the TopLink site mosty ignores the elephant in the room (see graph).

Well, let's look at the Apache OpenJPA site, as KODO's DNA may live at Apache long after Oracle decides on a single JPA solutions and likely leaves KODO to rot on the vine. Searching through the main site, FAQ, OpenJPA documentation, etc., I find no mention of Hibernate. Now this is an open source project so one would likely expect to see no marketing angle per se. But, you might expect that a project recognize that many would not be able to use this project without first justifying their pick against picking Hibernate (OpenJPA barely appears at all on job graphs). How many IT/development managers will feel comfortable with this choice without some explanation?

Now on to the next ORM framework site, Cayenne. No mention of Hibernate vs. Cayenne (but I know I have read articles on this). Seems like there might be some compelling ease-of-use arguments for Cayenne vs. Hibernate but they choose not to compare them. (Cayenne barely appears at all on job graphs)

Now back to Jonathan Lehr blog, Jonathan states that he feels TopLink and Cayenne are better choices than Hibernate and cites his reasons for these choices. There is a long discussion on the pros and cons of each in the comment section. I'd love to see more discussion, and I'd love to see some viable alternatives to Hibernate, but feel that no vendor or open source project does a real good job of pointing out the differences and possible limitations of Hibernate. If the vendors and project owners choose not to make their case, it makes it very difficult for the rank and file developers to make their case.

Perhaps one reason Hibernate is so dominate is because competing projects are so bad at technical marketing. Not one project I looked at mentions Hibernate on their front page. I could not find a decent comparison of features (to Hibernate's) on any of the ORM sites.

Has anyone done a comparison of Hibernate and OpenJPA, TopLink Essentials, Cayenne that compares ease-of-use, caching, tool support, legacy integration, etc.? Perhaps such an internal report was used to decide which ORM tool to pick. If so, what were the results?

If you use TopLink, OpenJPA, Cayenne instead of Hibernate, why?

Were you hoping that JPA would level the playing field and there would be more competition?

Cassandra Adds Hadoop MapReduce

Today the Cassandra project announced its first new release since becoming a Top-Level Project at Apache. Don't let the low version number fool you. Cassandra 0.6 is one of the most mature NoSQL distributed data stores in the open source market. It was heavily developed by Facebook before it was open sourced in August 2008. Currently Cassandra is being used by four of the largest social media sites in the world: Facebook, Digg, Reddit, and Twitter.

One of the primary new features in Cassandra 0.6 is support for Apache Hadoop. This is a major upgrade for Cassandra, giving it even more "big data" capabilities. The new feature will allow Cassandra to run analytics against its own data using Hadoop's reliable MapReduce framework.

Cassandra 0.6 simplifies its architecture with a new integrated caching row. With the implementation of this new feature, Cassandra no longer needs a separate caching layer. Along with the simplified architecture, Cassandra 0.6 also features a performance boost. The distributed data store can already process thousands of writes per second, and this version's enhancements builds on that number.

"Apache Cassandra 0.6 is 30% faster across the board, building on our already-impressive speed," said Jonathan Ellis, Apache Cassandra Project Management Committee Chair in the press release. "It achieves scale-out without making the kind of design compromises that result in operations teams getting paged at 2 AM." The Storage Team Technical Lead at Twitter, Ryan King, explained Twitter's reasons for using Cassandra: "At Twitter, we're deploying Cassandra to tackle scalability, flexibility and operability issues in a way that's more highly available and cost effective than our current systems."

One of Cassandra's best known features is its lack of any single point of failure. The data store's distributed system smoothly replaces any node that goes down with a new node. The system also has the flexibility to be tuned for more consistency or more availability.

The previous version of Cassandra (0.5) added load balancing and significantly improved bootstrap and concurrency. New tools were also added, including JSON-based data import and export, new JMX metrics, and an improved command line interface. “It's fantastic seeing the Project's community at the ASF grow to match the promise of the technology," said Ellis.

You can download Cassandra 0.6 now on the project's website. For more info on Cassandra, check out "4 Months with Cassandra, a love story."

Cache selection(POC) for one of my product

Introduction

The purpose of this document is to define a new cache implementation for one of my product. This new cache implementation is proposed to improve the scalability, manageability, and distribution of cache items in the my Platform.

Current Cache Issues

The following sections define the most critical problems with the current cache implementation of which the proposed new cache solution will address.

Multiple Cache Copies

The current cache implementation is designed such that a complete copy of the cache exists on every application server in which our Product is deployed. With this design, as clients and data are added to the cache in an instance of a our platform product the amount of memory taken up by the cache increases linearly with the amount of data added to it. The result of this increase in memory per client and data item added takes away from application server memory thus limiting the overall scalability of the platform. As memory increases due to new clients and data, adding application server machines to handle increased load has less and less of an effect because of the amount of memory taken away from the application itself by the cache.

As clients (and products) are added to the system the amount of memory allocated by the cache increases until adding machines to the cluster to handle performance load (CPU) issues has no effect due to memory constraints and a new separate application cluster would need to be created. Once the client/application threshold is reached in terms of memory, the footprint of all the machines in the cluster will look like the 10 client footprint .

Cache Reload Time

Another side effect of having an instance of the cache in each application server is if an application server must be restarted or shut down for any reason the data in the cache is cleared. When the application is restarted the cached data must be reloaded from the database. The time to reload the cache can be considerable and will only increase as we add clients and data to the cache over time. The time it takes to reload the cache directly increases the amount of downtime required to make code or other modifications requiring a restart of the system. The reloading of the cache is especially time consuming in the current cache implementation since most of the items in the cache are loaded at startup instead of as-needed.

Cache Item Update Complexity

The current cache implementation also has the added complexity of updating other application servers participating in a our Product cluster when a cached item is modified. For example, if a cache item is modified in Machine 1 of a cluster of 5 Machines then the item must also be sent to the modified item must also be updated in caches of the remaining 4 machines. Up to this time, this distributed cache process has been problematic during restarts and there is no 100pct certainty that modified cache items are distributed properly to the other machines participating in the cluster.

Proposed Solution to the Current Cache Issues

In order to solve the issues of the current cache implementation, a distributed-hash based cache solution is proposed. In a distributed-hash based cache (DHBC) implementation the cache is extracted from the application servers and resides in a separate cluster of cache machines. The DHCB cluster acts as a single very large cache limited only by the total memory of all the machines participating in the cluster. So for example if there are four machines in the DHBC cluster each with 4GB of RAM then the DHBC cluster capacity will be 16GB minus whatever memory the operating system and required apps need.

The distributed-hash algorithm determines which cache cluster machine in which to connect. Once an item is added to the cache by a member of the application server cluster, the item is available to all members of the application server cluster without having to distribute it.

Cache Items in a DHBC

A cache item in a DHBC cluster exists only once in the cache. When an item is requested from the DHBC the distributed hash algorithm determines on which machine the cache item resides and returns the appropriate item if it exists. The distributed hash algorithm also determines on which machine to put a new cache item. As new machines are added to the DHBC cluster the distributed hash algorithm automatically adjusts itself and uses the new machine as part of the overall cache.

If a machine participating in a DHBC cluster goes down, the distributed hash algorithm is designed to adapt to the fact that a participating machine is down. Any new cached items will be added to the remaining servers and items that were in the downed server must be added again to the distributed cache.

Load on Demand Additionally, in order to be as fault-tolerant as possible the cache strategy must be changed from a “load once at startup and update” strategy to a “load on demand” strategy. If a “load on demand” strategy is employed by the application programmers then the application itself will be insulated from issues that may arise if any machines participating in the DHBC cluster fail. A “load on demand” strategy means if item already exists in the cache when requested then return it, otherwise add it to the cache so new requests for the cached item will have it. In this case, if a machine participating in the DHBC cluster fails and a cached item is not returned then simply add it again to the cache. The only issue will be a slight performance hit when the cached items in the failed machine are reloaded into the cache as they are requested.

Cache Updates

Cache updates are much less complex in a DHBC implementation than the existing cache solution. If an item is added to the cache or replaced with a new value the item is automatically available to any application that requests it. The item does not need to be distributed to other servers in the cluster (application or otherwise).

Effects on Application Reload Time

Since the items in a DHBC cluster do not reside within the application server, the application servers can be stopped and restarted without affecting the cache. So, if an application server needs to be restarted because of a code change or other maintenance the application server can be started much more quickly since the cache does not need to be reloaded. Additionally, the newly started server will have access to any new items that were added or updated to the cache while the server application server was down for maintenance.

Implementing the DHBC

In order to implement the DHBC it is required to have a DHBC implementation itself and a client from which to access the HDBC cluster. For this purpose it is recommended to use the Linux based memcached implementation. Note that the client itself is available in most languages and platforms and does need to run on a Linux platform.

Memcached

Memcached is commonly available for most Linux distributions and has been used extensively by Facebook and many other websites as well as the MySQL database and Hibernate database library to provide scalable easy to use cache implementations. Memcached and the clients that use it are all open sourced so we can acquire the source code and modify it if necessary.

Information on memcached can be acquired from the following web site:

http://www.danga.com/memcached/

The list of memcached clients that can be used for each programming language is found here:

http://code.google.com/p/memcached/wiki/Clients

Sample code for each of the clients can be acquired from the above web site. However, for initial testing I have used the spymemcached client listed on the above web site and some sample code of my own for it be needed.

Hash Key The most important part of implementing the DHCB will be the choice of hash key. The hash key is used to uniquely identify a hash item in the DHCB. I recommend a key like the following:

< DISCRIMINATOR>.<APPLICATION>.<CLIENT>.<VALUE_KEY>

Where DISCRIMINATOR can be anything desired such as “TestEnvironment” or another grouping, APPLICATION is an application identifier such as “OurProduct”, CLIENT is either “ANY_CLIENT” or a specific client such as “WalMart”, and the VALUE_KEY can be anything appropriate.

For example if the DISCRIMINATOR is“JeffsTests”, the APPLICATION is “OurProduct”, the value is shared across clients, and the VALUE_KEY is page.login.firstNamethen the key would be “JeffsTests.OurProduct.ANY_CLIENT.page.login.userName”. The example key is for clarity but the key components can be abbreviated and shortened.

The <DISCRIMINATOR> in the key strategy allows multiple installations (or developers or testers or environments) to use the same memcached instance without interfering with each other.

This key strategy should be flexible enough for our present and future needs but can be enhanced as better ideas arise.

Memcached Environment

There is a test environment with the memcached server installed and already set up on two Ubuntu Linux servers. The IP addresses, user names, and passwords are available on request. It is necessary to download and install an SSH client to connect to and use the Linux servers from a windows desktop. A reasonable choice is the freely available putty (http://www.chiark.greenend.org.uk/~sgtatham/putty/) SSH client.

Implementation in Existing product

To implement memcached in the existing product it is recommended to create a switch value that can switch between memcached implementation and the existing design until developers, hosting, and others get used to working with memcached. The existing design can then be phased out whenever is appropriate.

In addition to the switch above, the cache strategy in the existing product should be changed to a “load on demand” strategy as defined in the Load on Demand section of this document. If, after implementing the“load on demand” strategy it is still required or desired to load very frequently and/or first used information into the cache then a routine to seed the cache by simply requesting keys for those items can be put in place.

It is required to implement an appropriate cache key strategy similarly to the strategy defined in the Hash Key section of this document.

Tools and utilities should also be created to check the number of keys, memory allocated, and other statistical information about the cache. The statistical tools should be compatible with the SiteMonitor program hosting uses to check for failures to ensure any production issues are quickly identified.

Additionally, routines should be created to allow for selective deletion and arbitrary viewing of items in the cache for maintenance reasons such as removing clients and debugging.

Testing

Since the cache implementation touches virtually every component of the our Product and related applications, once implementation in our product is completed a full functional regression test of the Applications making use of the cache needs to be performed. Additionally, the usual load tests should be performed as well to address any performance concerns.

Deployment

It is recommended that there be at least three memcached environments be deployed after implementation is complete. The three environments are Production, Staging, and QA/Development. QA and Development can make use of the< DISCRIMINATOR> value in the key to isolate key items from development and testing efforts. Another environment can be created if it is not desired to have QA and development using the same machines.

There should be at least two servers in the memcached cluster to allow for fault tolerance, complete testing, and scalability. In the production environment I recommend at least three servers to make sure that if a machine goes down there is ample room in the cluster to handle the cached items in the failed server. For example, if it takes 4GB to cache all the required data I would recommend three machines with at least 2GB of memory so the remaining clusters can handle the cache requirements without the third machine. It is also important to make sure the network connecting the application server machines and the DHBC cluster is a fast and fault tolerant as possible to avoid bottlenecks and performance issues.

More Possibilities

In addition to replacing the existing cache mechanism used by our product the DHBC can be used for other functions as well. Since the amount of memory available to a DHBC cluster is very large (some implementations have terabytes) depending on the capacity and number of machines in the cluster, the cache can be used to keep almost anything in memory.

One possible example might be to cache the complete list of candidates or jobs for an important client to get extreme performance and take load away from the database. Other possibilities include anything that can take the load off the database such as caching frequently searched result sets and expiring them after a certain amount of time. It also should be possible to cache frequently accessed assets (pdf files, other documents, user uploaded images, etc.) and dynamically generated html pages (if they don’t change much).

Since the DHBC is shared across applications it should be possible to use it as a session sharing mechanism or general utility to keep information available for shared application usage without hitting the database.

Once the cache is complete, available, and tested, more thought should be done to find new imaginative ways in which the cache can be leveraged to improve performance and scalability in our applications.

MemCachedVsEhCache

Overview

Two distributed cache servers; Ehcache (Distributed Caching With Terracotta) and Memcached were evaluated to determine which one better fits the needs of our product. They were evaluated in various areas including ease of configuration, API usage, and overall speed.

Hardware

Client Machine:

AMD Sempron 2800+

1.61. GHz, 2 GB RAM

Windows Server 2003, Service Pack 2

Server Machine:

Dell Optiplex 740

Intel Core 2 CPU 6700

2.66 GHz, 1 GB RAM

Windows Server 2003, Service Pack 2

Software

Memcached Software:

Server:memcached-x86 1.4.5 binary for Windows

Test Client:spymemcached 2.5

Ehcache Software:

Server:terracotta-3.4.0 (Single Server Array)

Test Client:Ehcache 2.3.1

Configuration

Both cache servers were relatively easy to setup. For Memcached, a windows 1.4.5 version binary (memcached.exe) was used (I can’t recall the source url). Running memcached –h gives a list of the available options. For this evaluation memcached –m was the only option used to specify the maximum amount of memory to allow. For Ehcache, Ehcache 2.3.1 (ehcache-core-2.3.1-distribution.tar.gz) along with Terracotta 3.4.0 (terracotta-3.4.0_1-installer.jar) was downloaded from http://ehcache.org. The Terracotta installer, invoked with java -jar terracotta-3.4.0_1-installer.jar was easy to follow. The server can be started using the bin\start-tc-server.bat script in the installation folder. In summary, both cache servers are relatively easy to configure. I would probably give the edge here to Ehcache simply because there is a wealth of options available and they are well documented. Additionally, Ehcache uses a configuration file (ehcache.xml), which allows for different cache configurations which are selected at runtime.

API Usage

Both API’s are relatively simple to use. Adding, retrieving and deleting elements is straightforward.

Ehcache

import net.sf.ehcache.Cache;

import net.sf.ehcache.CacheManager; import net.sf.ehcache.Element;

public class Ehcache implements ICache

{

CacheManager mCacheManager;

Cache mCache;

public void init() throws Exception

{

InputStream fis = new FileInputStream(new File(<config file>))

try

{

mCacheManager = new CacheManager(fis);

mCache = mCacheManager.getCache(<cache name>);

}

finally

{

fis.close();

}

}

public void put(String pKey,Object pValue) throws Exception

{

mCache.put(new Element(pKey,pValue)); }

public Object get(String pKey) throws Exception

{

Element lElement = mCache.get(pKey);

if (lElement != null)

return lElement.getObjectValue();

return null;

}

public void remove(String pKey) throws Exception

{

mCache.remove(pKey);

}

}

MemCached

import java.net.InetSocketAddress;

import java.util.Arrays;

import net.spy.memcached.MemcachedClient;

import com.kenexa.core.data.dhbc.system.memcached._DefaultConnectionFactory;

public class Memcached implements ICache

{

MemcachedClient mMemcachedClient;

public void init() throws Exception

{

String lHost = "192.168.10.101";

int lPort = 11211;

InetSocketAddress address = new InetSocketAddress(lHost,lPort);

mMemcachedClient = new MemcachedClient(new _DefaultConnectionFactory(), Arrays.asList(address));

}

public void put(String pKey,Object pValue) throws Exception

{

mMemcachedClient.set(pKey,3600,pValue);

}

public Object get(String pKey) throws Exception

{

return mMemcachedClient.get(pKey);

}

public void remove(String pKey) throws Exception

{

mMemcachedClient.delete(pKey);

}

}

Ehcache additionally provides the ability to iterate the keys in the cache and extract statistical and other information which can be used to implement custom analysis tools. For this reason, I would rate Ehcache’s API better.

Performance

In order to evaluate the performance of both cache servers, the add, retrieve and remove operations were compared.

Test 1: Add

In the first test, fixed sized random byte arrays were added to the cache and the time to complete each set in milliseconds was recorded. I started with Ehcache and added 1000 random byte arrays of 1K, 10K and 100K followed by 10000 arrays of 1K and 10K. Finally, 2500 100K and 500 500K arrays were added. Each test was ran three (3) times to ensure the results were valid and the server software was stopped and restarted for each test to allow for consistent results. I then repeated the same tests for Memcached. The median result for each test is posted below.

Result:

Memcached’s outperformed Ehcache in these tests. I had initially collected some baseline numbers by running the same tests using only the client machine to host both the test client and cache server and the results were consistent with using two servers.

Add 1000 1K Byte Arrays

Cache Server Time (milliseconds)

Ehcache 4750

Memcached 781

Add 1000 10K Byte Arrays

Cache Server Time (milliseconds)

Ehcache 5500

Memcached 2078

Add 1000 100K Byte Arrays

Cache Server Time (milliseconds)

Ehcache 18547

Memcached 4735

Add 10000 1K Byte Arrays

Cache Server Time (milliseconds)

Ehcache 28094

Memcached 9360

Add 10000 10K Byte Arrays

Cache Server Time (milliseconds)

Ehcache 38718

Memcached 11094

Add 2500 100K Byte Arrays

Cache Server Time (milliseconds)

Ehcache 44937

Memcached 12531

Add 500 500K Byte Arrays

Cache Server Time (milliseconds)

Ehcache 35953

Memcached 14500

Test 2: Add – Remove

In this test, fixed sized random byte arrays were added and removed from the cache and the time to complete each set in milliseconds was recorded. I started with Ehcache and added/removed 1000 random byte arrays of 1K, 10K and 100K followed by 10000 arrays of 1K and 10K. Finally, 2500 100K and 500 500K arrays were added/removed. Each test was ran three (3) times to ensure the results were valid and the server software was stopped and restarted for each test to allow for consistent results. I then repeated the same tests for Memcached. The median result for each test is posted below.

Result:

Memcached’s performance was again faster in this scenario.

Add-Remove 1000 1K Byte Arrays

Cache Server Time (milliseconds)

Ehcache 6047

Memcached 1204

Add-Remove 1000 10K Byte Arrays

Cache Server Time (milliseconds)

Ehcache 6578

Memcached 2891

Add-Remove 1000 100K Byte Arrays

Cache Server Time (milliseconds)

Ehcache 17109

Memcached 5516

Add-Remove 10000 1K Byte Arrays

Cache Server Time (milliseconds)

Ehcache 33172

Memcached 15641

Add-Remove 10000 10K Byte Arrays

Cache Server Time (milliseconds)

Ehcache 37828

Memcached 20875

Add-Remove 500 500K Byte Arrays

Cache Server Time (milliseconds)

Ehcache 34500

Memcached 14468

Add-Remove 2500 100K Byte Arrays

Cache Server Time (milliseconds)

Ehcache 44766

Memcached 14141

Test 3: MultiThreaded Get for 15 minutes

In this test, 1000 100K random byte arrays were first loaded to each cache server. Ten (10) threads were then created in the test client and each thread repeatedly retrieved random elements from the cache. A checksum was included with the added data and used during retrieval to ensure that the data was valid. Each test ran for 15 minutes to see how many records could be retrieved in that time period. There was no delay between operations. The average retrieval time per thread is also included.

Result:

Ehcache

runGetTestThreaded(Ehcache,1000 elements,size[102400] - 10 threads : 900 secs)

Thread Thread[Thread-19,5,] count = 8096, Avg Get Time = 110.49085968379447

Thread Thread[Thread-20,5,] count = 8072, Avg Get Time = 110.78889990089198

Thread Thread[Thread-21,5,] count = 8188, Avg Get Time = 109.18075232046898

Thread Thread[Thread-22,5,] count = 8143, Avg Get Time = 109.89942281714356

Thread Thread[Thread-23,5,] count = 8097, Avg Get Time = 110.44646165246388

Thread Thread[Thread-24,5,] count = 8054, Avg Get Time = 111.07946362056121

Thread Thread[Thread-25,5,] count = 8144, Avg Get Time = 109.85940569744598

Thread Thread[Thread-26,5,] count = 8094, Avg Get Time = 110.57289350135903

Thread Thread[Thread-27,5,] count = 8040, Avg Get Time = 111.2957711442786

Thread Thread[Thread-28,5,] count = 8129, Avg Get Time = 110.07467093123385

Total Count = 81057

Memcached

runGetTestThreaded(Memcached,1000 elements,size[102400] - 10 threads : 900 secs

Thread Thread[Thread-1,5,] count = 9580, Avg Get Time = 93.30365344467641

Thread Thread[Thread-2,5,] count = 9579, Avg Get Time = 93.33312454327174

Thread Thread[Thread-3,5,] count = 9579, Avg Get Time = 93.35369036433866

Thread Thread[Thread-4,5,] count = 9579, Avg Get Time = 93.3253993109928

Thread Thread[Thread-5,5,] count = 9579, Avg Get Time = 93.35953648606326

Thread Thread[Thread-6,5,] count = 9581, Avg Get Time = 93.27439724454649

Thread Thread[Thread-7,5,] count = 9581, Avg Get Time = 93.29683749086735

Thread Thread[Thread-8,5,] count = 9581, Avg Get Time = 93.25988936436697

Thread Thread[Thread-9,5,] count = 9580, Avg Get Time = 93.27651356993736

Thread Thread[Thread-10,5,] count = 9580, Avg Get Time = 93.25490605427974

Total Count = 95799

Memcached performance was slightly faster in this test. As it terms out, Ehcache has an option maxElementsInMemory, which sets the maximum number of objects that will be created in memory. Setting this value to 1000 and restarting the test yields the following results.

runGetTestThreaded(Ehcache,1000 elements,size[102400] - 10 threads : 900 secs)

Thread Thread[Thread-19,5,] count = 188456, Avg Get Time = 0.06041728573247867

Thread Thread[Thread-20,5,] count = 187452, Avg Get Time = 0.06884429080511277

Thread Thread[Thread-21,5,] count = 188048, Avg Get Time = 0.046940142942227515

Thread Thread[Thread-22,5,] count = 189150, Avg Get Time = 0.0383187946074544

Thread Thread[Thread-23,5,] count = 186504, Avg Get Time = 0.05214365375541544

Thread Thread[Thread-24,5,] count = 191792, Avg Get Time = 0.06031534162008843

Thread Thread[Thread-25,5,] count = 187647, Avg Get Time = 0.046033243270609175

Thread Thread[Thread-26,5,] count = 190728, Avg Get Time = 0.04553605134012835

Thread Thread[Thread-27,5,] count = 187245, Avg Get Time = 0.03894897060001602

Thread Thread[Thread-28,5,] count = 187020, Avg Get Time = 0.04590418137097636

Total Count = 1884042

Almost 2 million results and there was no cpu activity on the server. What this means is that Ehcache when used with Terracotta as a distributed cache, has the ability to also provide a local store. This is something that I’ve been meaning to incorporate into the current framework but I hadn’t figured out the best way to keep the local cache in sync with the server. There are instances where we will not always want to go over the network to retrieve cache data so this is a feature we will want to leverage.

Conclusion

There are other factors not included in the above results. At times restarting the Memcached server (which I did a lot) proved problematic as the client would experience errors where I was not sure of the actual cause. I was able to solve these issues by stopping the server and waiting for about a minute or two. Sometimes I had to restart the machine. Some of my tests not mentioned above proved too much for Ehcache as the garbage collector could not keep up and the process would run out of memory whereas Memcached had no problems. Overall, Memcached performed better and was less resource and cpi intensive. Even so, I believe that Ehcache is the better option for our product. The ability to have a local store for the most recently used objects per cache (our cache) would allow for less initial code changes. For example, code that accesses the same object repeatedly in a loop could stay that way without heavily impacting the application. We would want to rewrite such logic eventually but we wouldn’t be forced to do so up front. Also, in terms of monitoring, there seems to be better tools available for Ehcache. I’ve only glanced over them but another advantage is that the API for Ehcache allows exploration into the cache itself (i.e. a listing of all available keys), whereas I don’t believe the Memcached API has any such functionality.

Refactoring Techniques

What is Refactoring anyways?

Refactoring is the process of changing a computer program's source code without modifying its external functional behavior in order to improve some of the non-functional attributes of the software. It is a disciplined technique for restructuring an existing body of code, altering its internal structure without changing its external behaviour. Advantages include improved code readability and reduced complexity to improve the maintainability of the source code, as well as a more expressive internal architecture or object model to improve extensibility.

Its heart is a series of small behavior preserving transformations. Each transformation (called a 'refactoring') does little, but a sequence of transformations can produce a significant restructuring. Since each refactoring is small, it's less likely to go wrong. The system is also kept fully working after each small refactoring, reducing the chances that a system can get seriously broken during the restructuring. By continuously improving the design of code, we make it easier and easier to work with. If you get into the hygienic habit of refactoring continuously, you'll find that it is easier to extend and maintain code.

Motivation for Refactoring

Refactoring is usually motivated by noticing a code smell. For example the method at hand may be very long, or it may be a near duplicate of another nearby method. Once recognized, such problems can be addressed by refactoring the source code, or transforming it into a new form that behaves the same as before but that no longer "smells". For a long routine, extract one or more smaller subroutines. Or for duplicate routines, remove the duplication and utilize one shared function in their place. Failure to perform refactoring can result in accumulating technical debt. There are two general categories of benefits to the activity of refactoring.

Before refactoring a section of code, a solid set of automatic unit tests is needed. The tests should demonstrate in a few seconds that the behaviour of the module is correct. The process is then an iterative cycle of making a small program transformation, testing it to ensure correctness, and making another small transformation. If at any point a test fails, you undo your last small change and try again in a different way. Through many small steps the program moves from where it was to where you want it to be. Proponents of extreme programming and other agile methodologies describe this activity as an integral part of the software development cycle.

List of refactoring techniques

Here is a very incomplete list of code refactorings. A longer list can be found in Fowler's Refactoring book and on Fowler's Refactoring Website.

Techniques that allow for more abstraction

Writing Effective Designs

Overview

As the software development is maturing, the HLDs and LLDs are widely accepted as an integrated part of software development cycle, no matter what software development methodology we use. Even though the need of having a design is accepted and 30% to 40% of the total development effort is spent on design, it is rare to see effective designs that lay strong foundation for the application development. Very often they fail miserably in providing guidance and clarity for the development teams to start with and as a result the actual implementations are miles apart from the original design. In fact, in many cases the only useful purpose design serves is to complete the list of deliverables for the project for its closure and audits.

Writing effective design is one way to prevent such situations. This tutorial attempts to suggest a step by step approach to develop and deliver effective designs that shall not only be followed and respected by developer community but also play key role in development of stable and robust application.

What is design?

Before we get into the details about writing effective design, it is important that we have the same understanding about what the design is that we are referring to here. Here we are essentially talking about HLDs and LLDs for software development which is an essential part of software development and should ideally be available before the start of development face of the project.

What is an HLD?

Why we need HLD?

It is important for the management to be able to quantify effectiveness of a design. Following are a few signs that a good manager should always be looking at to find out if the designs are effective or not.

Characteristics of an ineffective HLD

Following are some signs for ineffective HLD

Following are some signs for ineffective LLD

Problems with HLD

It is equally important to for the delivery management to know if the design is effective as it is to know if it is not. It is management’s responsibility to not only improve the inefficiencies but also to encourage and carry forward and continue with the best practices and good design methodologies that are being followed.

Characteristics of an effective HLD

So far we have discussed the characteristics of an effective as well as an ineffective design and also the importance of the design and why do we need it. Now it is time that we should get our hands dirty with the real stuff “Writing effective design”. Here we shall discuss the prerequisites and the step by step approach to create effective HLDs and LLDs for a project.

Prerequisites for an effective HLD

Apart from all the prerequisites for HLD, the LLD designer should ensure that following artifacts are available before he/she starts with the LLD

Web Site Scalability

A classical large scale web site typically have multiple data centers in geographically distributed locations. Each data center will typically have the following tiers in its architecture

Content Delivery

Dynamic Content

There are 2 layers of dispatching for a Client who is making an HTTP request to reach the application server

DNS Resolution based on user proximity

This is concerned about designing an effective mechanism to communicate with the client, which is typically the browser making some HTTP call (maybe AJAX as well)

Designing the granularity of service call

Typical web transaction involves multiple steps. Session state need to be maintained across multiple interactions

Memory-based session state with Load balancer affinity

Remember the previous result can reuse them for future request can drastically reduce the workload of the system. But don't cache request which modifies the backend state.

Source : http://horicky.blogspot.in/2008/03/web-site-scalability.html

Database Scalability

Database is typically the last piece of the puzzle of the scalability problem. There are some common techniques to scale the DB tire

Indexing

Make sure appropriate indexes is built for fast access. Analyze the frequently-used queries and examine the query plan when it is executed (e.g. use "explain" for MySQL). Check whether appropriate index exist and being used.

Data De-normalization

Table join is an expensive operation and should be reduced as much as possible. One technique is to de-normalize the data such that certain information is repeated in different tables.

DB Replication

For typical web application where the read/write ratio is high, it will be useful to maintain multiple read-only replicas so that read access workload can be spread across. For example, in a 1 master/N slaves case, all update goes to master DB which send a change log to the replicas. However, there will be a time lag for replication.

Table Partitioning

You can partition vertically or horizontally.

Vertical partitioning is about putting different DB tables into different machines or moving some columns (rarely access attributes) to a different table. Of course, for query performance reason, tables that are joined together inside a query need to reside in the same DB.

Horizontally partitioning is about moving different rows within a table into a separated DB. For example, we can partition the rows according to user id. Locality of reference is very important, we should put the rows (from different tables) of the same user together in the same machine if these information will be access together.

Transaction Processing

Avoid mixing OLAP (query intensive) and OLTP (update intensive) operations within the same DB. In the OLTP system, avoid using long running database transaction and choose the isolation level appropriately. A typical technique is to use optimistic business transaction. Under this scheme, a long running business transaction is executed outside a database transaction. Data containing a version stamp is read outside the database trsnaction. When the user commits the business transaction, a database transaction is started at that time, the lastest version stamp of the corresponding records is re-read from the DB to make sure it is the same as the previous read (which means the data is not modified since the last read). Is so, the changes is pushed to the DB and transaction is commited (with the version stamp advanced). In case the version stamp is mismatched, the DB transaction as well as the business transaction is aborted.

Object / Relational Mapping

Although O/R mapping layer is useful to simplify persistent logic, it is usually not friendly to scalability. Consider the performance overhead carefully when deciding to use O/R mapping.

There are many tuning parameters in O/R mapping. Consider these ...

Scalable System Design

Building scalable system is becoming a hotter and hotter topic. Mainly because more and more people are using computer these days, both the transaction volume and their performance expectation has grown tremendously.

This one covers general considerations. I have another blogs with more specific coverage on DB scalability as well as Web site scalability.

General Principles

"Scalability" is not equivalent to "Raw Performance"

Common Techniques

Server Farm (real time access)

Scalable System Design Patterns

Load Balancer

In this model, there is a dispatcher that determines which worker instance will handle the request based on different policies. The application should best be "stateless" so any worker instance can handle the request.

Scatter and Gather

In this model, the dispatcher multicast the request to all workers of the pool. Each worker will compute a local result and send it back to the dispatcher, who will consolidate them into a single response and then send back to the client.

Result Cache

In this model, the dispatcher will first lookup if the request has been made before and try to find the previous result to return, in order to save the actual execution.

Shared Space

This model also known as "Blackboard"; all workers monitors information from the shared space and contributes partial knowledge back to the blackboard. The information is continuously enriched until a solution is reached.

Pipe and Filter

This model is also known as "Data Flow Programming"; all workers connected by pipes where data is flow across.

Map Reduce

The model is targeting batch jobs where disk I/O is the major bottleneck. It use a distributed file system so that disk I/O can be done in parallel.

Bulk Synchronous Parellel

This model is based on lock-step execution across all workers, coordinated by a master. Each worker repeat the following steps until the exit condition is reached, when there is no more active workers.

This model is based on an intelligent scheduler / orchestrator to schedule ready-to-run tasks (based on a dependency graph) across a clusters of dumb workers.

Source: http://horicky.blogspot.com/2010/10/scalable-system-design-patterns.html

Misconceptions About Software Architecture

References to architecture are everywhere: in every article, in every ad. And we take this word for granted. We all seem to understand what it means. But there isn't any wellaccepted definition of software architecture. Are we all understanding the same thing? We gladly accept that software architecture is the design, the structure, or the infrastructure. Many ideas are floating around concerning why and how you design or acquire an architecture and who does it. Here are some of the most common misconceptions about Software Architecture.

Architecture and design are the same thing

Architecture is design, but is not all of the design. Architecture is about making decisions on how the system will be built, it stops at the major abstractions, the major elements - the elements that are structurally important, but also those that have a more lasting impact on the performance, reliability, cost, and adaptability of the system. In constrast, design involves a lot more in order to take the design to implementation. In a word, architecture is the fundamental, architecturally-significant aspects of the design.

Architecture and infrastructure are the same thing

The infrastructure is an integral and important part of the architecture: It is the foundation. Choices of platform, operating systems, middleware, database, and so on, are major architectural choices. Architecture must include the application architecture plus the infrastructure architecture. There is far more to architecture than just the infrastructure. The architects have to consider the whole system, including all applications otherwise an overly narrow view of what architecture is may lead to a very nice infrastructure, but the wrong infrastructure for the problem at hand.

Architecture is just a structure

Architecture is more than just the structural organization of design elements, it also includes the description of how these elements collaborate, as well as why things are the way they are (the rationale). The architecture must describer how the architecture fits into the current business context and must address how it can be developed within the current development context.

Architecture is flat and one blueprint is enough

Architecture is a complex beast; it is many things to many different stakeholders. Using a single blueprint to represent architecture results in an unintelligible semantic mess. Like building architects who have floor plans, elevations, electrical cabling diagrams, and so on, we need multiple blueprints to address different concerns, and to express the separate but interdependent structures that exist in an architecture.

Architecture cannot be measured or validated

Architecture is not just a whiteboard exercise that results in a few interconnected boxes and is then labeled a high-level design. The development of the architecture involves design, implementation, testing, etc. There are many aspects you can validate by inspection, systematic analysis, simulation, or modelization.

Architecture is Science

If there is a spectrum between art (creative) and science (prescriptive), architecture is somewhere in-between. The architecture problem space is quite large. One of the reasons that architecture cannot yet be considered a science is that there are no good guidelines on how to traverse the solution space, "prune it", and then apply the selected solutions. Architecture is becoming an engineering discipline (it is steadily moving toward the science end of the spectrum). Nevertheless, it is not a strict science because if you put two architect in seperate rooms, give them just the requirements, each will (most probably) come up with a different architecture. However, if you constrain them with a set of architectural patterns, their results will be more similar.

Architecture is an art

Let's not fool ourselves. The artistic, creative part of software architecture is usually very small. Most of what architects do is copy solutions that they know worked in other similar circumstances, and assemble them in different forms and combinations, with modest incremental improvements. It is possible to describe an architectural process that has precise steps and prescribed artifacts, and that takes advantage of heuristics and patterns that are starting to be better understood.

Two powerful principles to improve the design

Coupling is usually contrasted with cohesion. Low coupling often correlates with high cohesion, and vice versa.

Low coupling is often a sign of a well-structured computer system and a good design, and when combined with high cohesion, supports the general goals of high readability and maintainability.

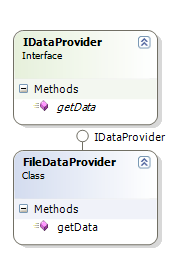

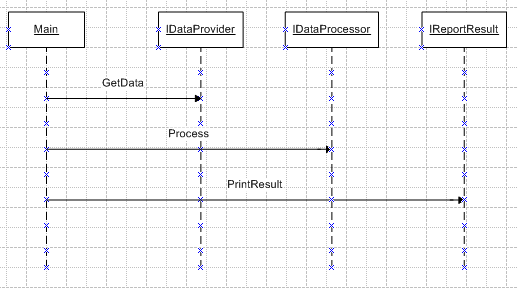

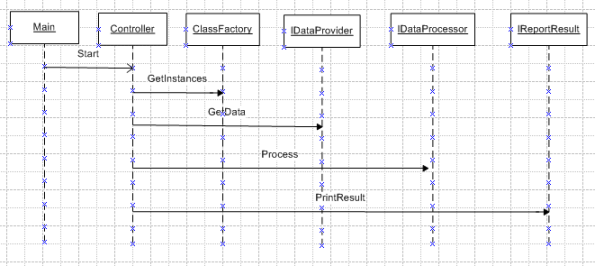

The goal of this case study is to show the benefits of low coupling and high cohesion, and how it can be implemented with Java. The case study consists of designing an application that accesses a file in order to get data, processes it, and prints the result to an output file.

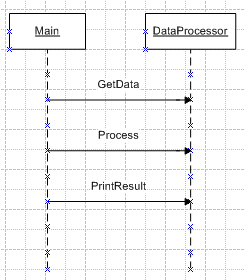

Solution without design:

For this first solution the design is ignored and only one class named DataProcessor is used to:

- Get data from file.

- Processing data.

- Print result.

And the main method invokes the methods of this class.

I. Usability

This is the definition of the usability attributes that are required from the business. This addresses the complexity/simplicity of use.

II. Modifiability

This attribute defines the maintainability and modifiability of this feature/functionality. Considerations to the ease of making changes all the way to the availability of resources with the technical knowledge to support the feature/functionality are addressed here.

III. Performance

This attributes defines the performance qualities for this feature/functionality. Response times, processing times, etc. should be considered.

IV. Availability

This attribute defines what qualities pertain to how available this feature/functionality must be to users and other systems. Failover, recovery times, etc. would be considered here.

V. Security

This attribute defines the security qualities that define this feature/functionality. Elements as in protecting access, data, etc. should be considered.

VI. Testability

This attribute defines the quality attributes for testing this feature/function. Ease of testing – including Unit as well as QA are considered. Special attention to quality controls like test automation, heartbeats, dashboards that self test are also included in these considerations.

VII. Scalability

This attribute defines how this feature/functionality scales. Overall capacity should be considered at this point including future growth.

VIII. Portability

This attribute defines the portability of this functionality – Cross Plattform functionality as well as maintaining a light interdependence on common vendor functdionality (i.e.WebServers: JBoss vs Weblogic &/or WebSphere).

IX. Business Qualities

A. Time

This attribute defines the time constraints to deliver this functionality..

B. Cost (CBA)

This attribute defines the cost benefit of this functionality.

C. Market

This attribute defines the market segmentation of this functionality. Since we aren’t really managing to a Product Line, I’m OK with Omitting this in our definitions of architecture.

D. Integration

This attribute discusses the system integration of this functionality to other systems

E. Lifetime

This attribute defines the expected time this product is expected to exist, iterations that are expected to impact it, etc.

The 4 building blocks of Architecting Systems for Scale

A quick gloss on the building blocks:

- Load Balancing: Scalability & Redundancy. Horizontal scalability and redundancy are usually achieved via load balancing, the spreading of requests across multiple resources.

- Smart Clients. The client has a list of hosts and load balances across that list of hosts. Upside is simple for programmers. Downside is it's hard to update and change.

- Hardware Load Balancers. Targeted at larger companies, this is dedicated load balancing hardware. Upside is performance. Downside is cost and complexity.

- Software Load Balancers. The recommended approach, it's software that handles load balancing, health checks, etc.

- Caching. Make better use of resources you already have. Precalculate results for later use.

- Application Versus Database Caching. Databases caching is simple because the programmer doesn't have to do it. Application caching requires explicit integration into the application code.

- In Memory Caches. Performs best but you usually have more disk than RAM.

- Content Distribution Networks. Moves the burden of serving static resources from your application and moves into a specialized distributed caching service.

- Cache Invalidation. Caching is great but the problem is you have to practice safe cache invalidation.

- Off-Line Processing. Processing that doesn't happen in-line with a web requests. Reduces latency and/or handles batch processing.

- Message Queues. Work is queued to a cluster of agents to be processed in parallel.

- Scheduling Periodic Tasks. Triggers daily, hourly, or other regular system tasks.

- Map-Reduce. When your system becomes too large for ad hoc queries then move to using a specialized data processing infrastructure.

- Platform Layer. Disconnect application code from web servers, load balancers, and databases using a service level API. This makes it easier to add new resources, reuse infrastructure between projects, and scale a growing organization.

In this post I'll attempt to document some of the scalability architecture lessons learned while working on systems at Yahoo! and Digg.

I've attempted to maintain a color convention for diagrams in this post:

- green represents an external request from an external client (an HTTP request from a browser, etc),

- blue represents your code running in some container (a Django app running on mod_wsgi, a Python script listening to RabbitMQ, etc), and

- red represents a piece of infrastructure (MySQL, Redis, RabbitMQ, etc).

Load Balancing: Scalability & Redundancy

The ideal system increases capacity linearly with adding hardware. In such a system, if you have one machine and add another, your capacity would double. If you had three and you add another, your capacity would increase by 33%. Let's call this horizontal scalability.

On the failure side, an ideal system isn't disrupted by the loss of a server. Losing a server should simply decrease system capacity by the same amount it increased overall capacity when it was added. Let's call this redundancy.

Both horizontal scalability and redundancy are usually achieved via load balancing.

(This article won't address vertical scalability, as it is usually an undesirable property for a large system, as there is inevitably a point where it becomes cheaper to add capacity in the form on additional machines rather than additional resources of one machine, and redundancy and vertical scaling can be at odds with one-another.)

Load balancing is the process of spreading requests across multiple resources according to some metric (random, round-robin, random with weighting for machine capacity, etc) and their current status (available for requests, not responding, elevated error rate, etc).